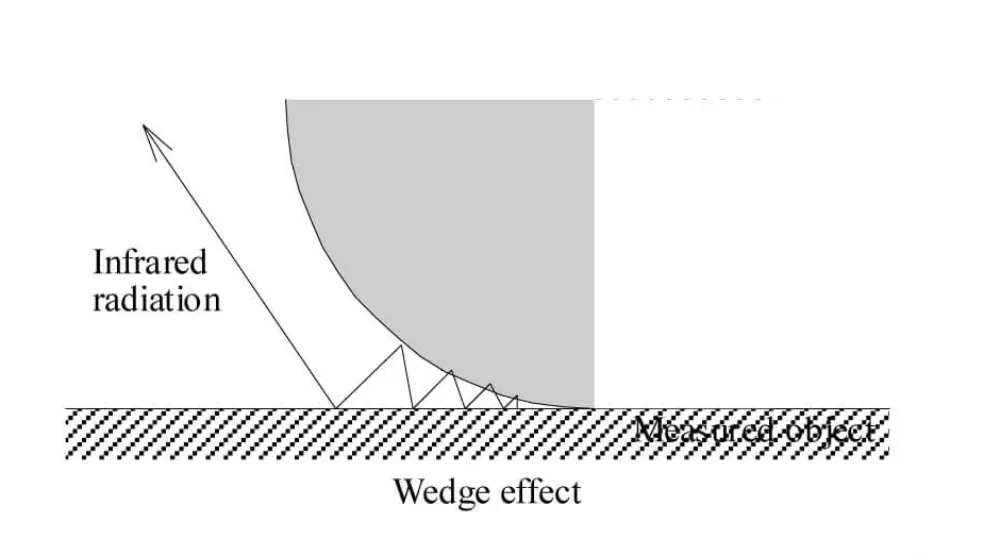

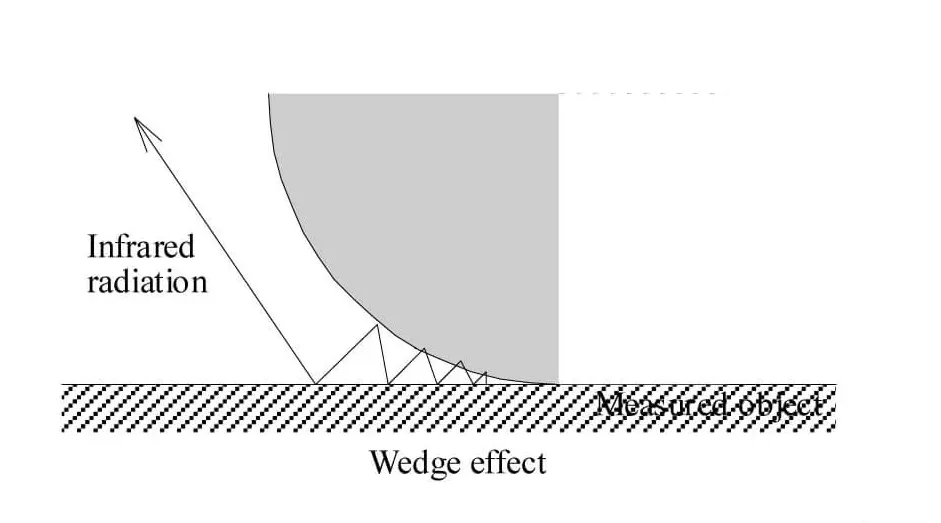

Cavity Effect

The cavity effect in infrared thermography is the phenomenon whereby a surface inside a cavity, such as a hole, groove, or recess, shows a higher apparent emissivity than the same surface when it is flat and exposed. The increase arises from multiple reflections of infrared radiation within the cavity. Radiation emitted or reflected inside the cavity can strike the walls several times before exiting. At each reflection, part of the radiation is absorbed and the wall adds its own emission, so the radiation leaving the opening is closer to that of a blackbody than the radiation from a flat surface of the same material.

The higher apparent emissivity is the cumulative result of these reflections. Together they make the cavity behave like a nearly perfect blackbody, with an effective emissivity that can approach 0.998, largely independent of the material’s inherent emissivity. This near-blackbody behavior reduces the influence of changes in material emissivity and of background radiation on the measurement. To reach an effective emissivity close to one, the hole must be at least six times as deep as its diameter.
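To illustrate how depth drives the apparent emissivity, here is a minimal sketch. It assumes a cylindrical, isothermal hole with diffusely reflecting walls and uses the first-order term of a Gouffé-style textbook approximation, ε_a ≈ ε / (ε + (1 − ε)·s/S), where s is the aperture area and S the total cavity surface including the aperture. The formula choice, the function name `apparent_emissivity`, and its parameters are assumptions made for illustration; they are not taken from this article, and the approximation is only meaningful for cavities that are deep relative to their diameter.

```python
def apparent_emissivity(material_emissivity: float, depth_to_diameter: float) -> float:
    """Rough apparent emissivity of a cylindrical hole (illustrative assumption).

    First-order, Gouffé-style estimate for an isothermal, diffusely
    reflecting cavity. Not valid for shallow cavities.
    """
    if not 0.0 < material_emissivity <= 1.0:
        raise ValueError("material_emissivity must be in (0, 1]")
    if depth_to_diameter <= 0.0:
        raise ValueError("depth_to_diameter must be positive")

    # For a cylinder of diameter d and depth L:
    #   aperture area  s = pi * d^2 / 4
    #   total surface  S = pi * d * L + 2 * (pi * d^2 / 4)  (wall + base + aperture)
    # so s / S = 1 / (4 * L/d + 2).
    area_ratio = 1.0 / (4.0 * depth_to_diameter + 2.0)

    eps = material_emissivity
    return eps / (eps + (1.0 - eps) * area_ratio)


if __name__ == "__main__":
    # Compare a few depth-to-diameter ratios for one wall material.
    for ratio in (1.0, 3.0, 6.0, 10.0):
        print(f"L/d = {ratio:>4}: {apparent_emissivity(0.8, ratio):.4f}")
```

Under these assumptions the estimate rises monotonically with the depth-to-diameter ratio and always lies above the wall material's own emissivity, which is the trend described above. Real cavities with temperature gradients or specular walls will deviate from this simple model.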

This phenomenon is also known as the wedge effect. Using the wedge or semi-wedge effect raises the effective emissivity of the measured surface. The method applies only when the material naturally forms a cavity or when an artificial cavity, such as a drilled hole or a coil, can be created.
